3D-Belief: Embodied Belief Inference via Generative 3D World Modeling


Recent visual generative models have advanced world modeling, but most focus on novel-view synthesis or future-frame prediction rather than the structured uncertainty that embodied agents need under partial observability. We propose framing world modeling as embodied belief inference in 3D space and introduce 3D-Belief, a generative 3D world model that maintains, samples, and updates explicit 3D beliefs from partial observations. By representing uncertainty directly in 3D, 3D-Belief enables spatially consistent memory, plausible scene completion, and semantic reasoning over unseen regions. Evaluations on 2D visual belief quality, our 3D-CORE benchmark, and object navigation in both simulation and the real world show that 3D-Belief improves 2D/3D imagination quality and downstream embodied task performance over state-of-the-art methods.


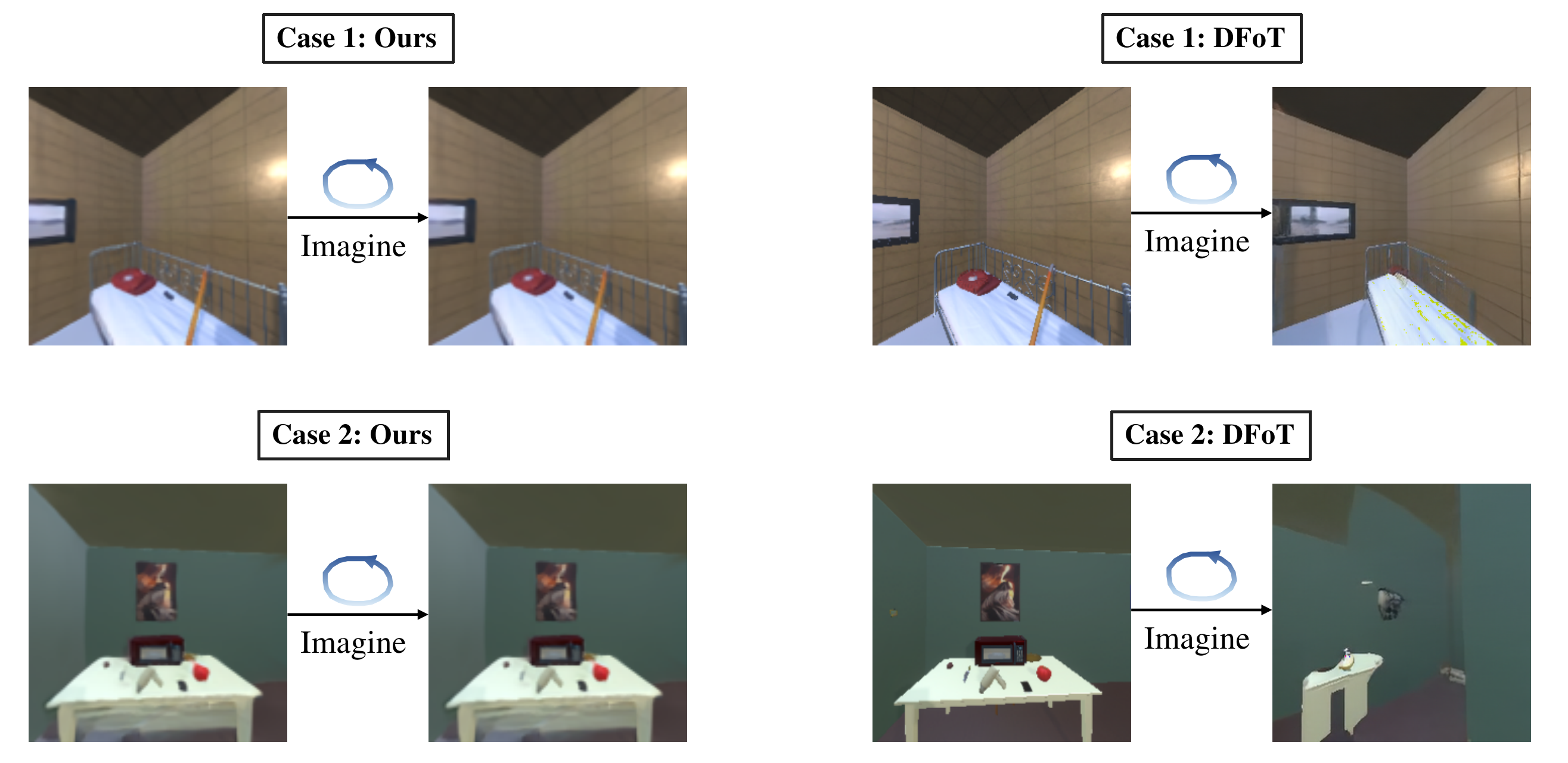

We compare the visual quality of 3D-Belief's predictions with that of state-of-the-art generative world models at unseen future viewpoints. Each panel shows, from left to right: ground truth, 3D-Belief (ours), DFoT, and a third baseline (Gen3C on RE10K, NWM on AI2-THOR). Compared with the baselines, 3D-Belief's predictions are more spatially consistent and more faithful to the ground truth.

We introduce 3D-CORE (3D COntextual REasoning), a benchmark that evaluates whether 3D world models learn the kinds of belief reasoning that embodied decision-making directly requires. 3D-CORE probes three capabilities that matter for downstream embodied planning: (1) spatial expansion beyond what is currently observed, (2) semantic reasoning grounded in 3D structure, and (3) long-horizon consistency under large viewpoint changes.

We use 3D-Belief as a mental 3D world model for navigation under partial observability. At each step, the agent updates its belief from egocentric RGB history and camera poses, samples multiple plausible 3D beliefs, imagines observations along candidate paths, and selects the rollout with the strongest open-vocabulary goal progress under uncertainty.
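The planning loop above can be sketched as follows. This is a minimal, hypothetical illustration of the control flow, not the 3D-Belief implementation: `select_rollout`, `sample_belief`, `imagine_views`, and `semantic_goal_score` are placeholder names, and averaging scores over sampled beliefs is one assumed way to aggregate "goal progress under uncertainty" (a max or a risk-sensitive statistic would be equally plausible). Goal progress for a path is taken here as the best open-vocabulary match in any imagined view, measured as cosine similarity between rendered per-pixel semantic features and a text embedding of the goal.

```python
"""Hypothetical sketch of belief-based rollout selection for object navigation.

None of these names come from the 3D-Belief codebase; the learned model's
sampling and rendering are passed in as callables.
"""
from typing import Callable, Sequence, TypeVar

import numpy as np

Belief = TypeVar("Belief")
Path = TypeVar("Path")
View = TypeVar("View")


def semantic_goal_score(feature_map: np.ndarray, text_embedding: np.ndarray) -> float:
    """Max cosine similarity between per-pixel features (H, W, D) and a goal text embedding (D,)."""
    feats = feature_map / (np.linalg.norm(feature_map, axis=-1, keepdims=True) + 1e-8)
    text = text_embedding / (np.linalg.norm(text_embedding) + 1e-8)
    return float((feats @ text).max())


def select_rollout(
    sample_belief: Callable[[int], Belief],
    candidate_paths: Sequence[Path],
    imagine_views: Callable[[Belief, Path], Sequence[View]],
    goal_score: Callable[[View], float],
    num_beliefs: int = 4,
) -> int:
    """Return the index of the candidate path with the highest mean goal progress."""
    # Draw several plausible 3D beliefs about the unobserved scene.
    beliefs = [sample_belief(k) for k in range(num_beliefs)]
    scores = np.zeros((num_beliefs, len(candidate_paths)))
    for i, belief in enumerate(beliefs):
        for j, path in enumerate(candidate_paths):
            # Imagine the observations the agent would see along this path.
            views = imagine_views(belief, path)
            # Progress along a path: strongest goal evidence in any imagined view.
            scores[i, j] = max(goal_score(v) for v in views)
    # Assumed aggregation: expected progress across the sampled beliefs.
    return int(np.argmax(scores.mean(axis=0)))
```

In a real agent, `goal_score` would wrap `semantic_goal_score` applied to a rendered semantic map and a CLIP-style text embedding of the target description.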

We evaluate 3D-Belief on open-vocabulary object navigation tasks in the AI2-THOR simulator, where an agent must find a specified target object in a previously unseen environment.

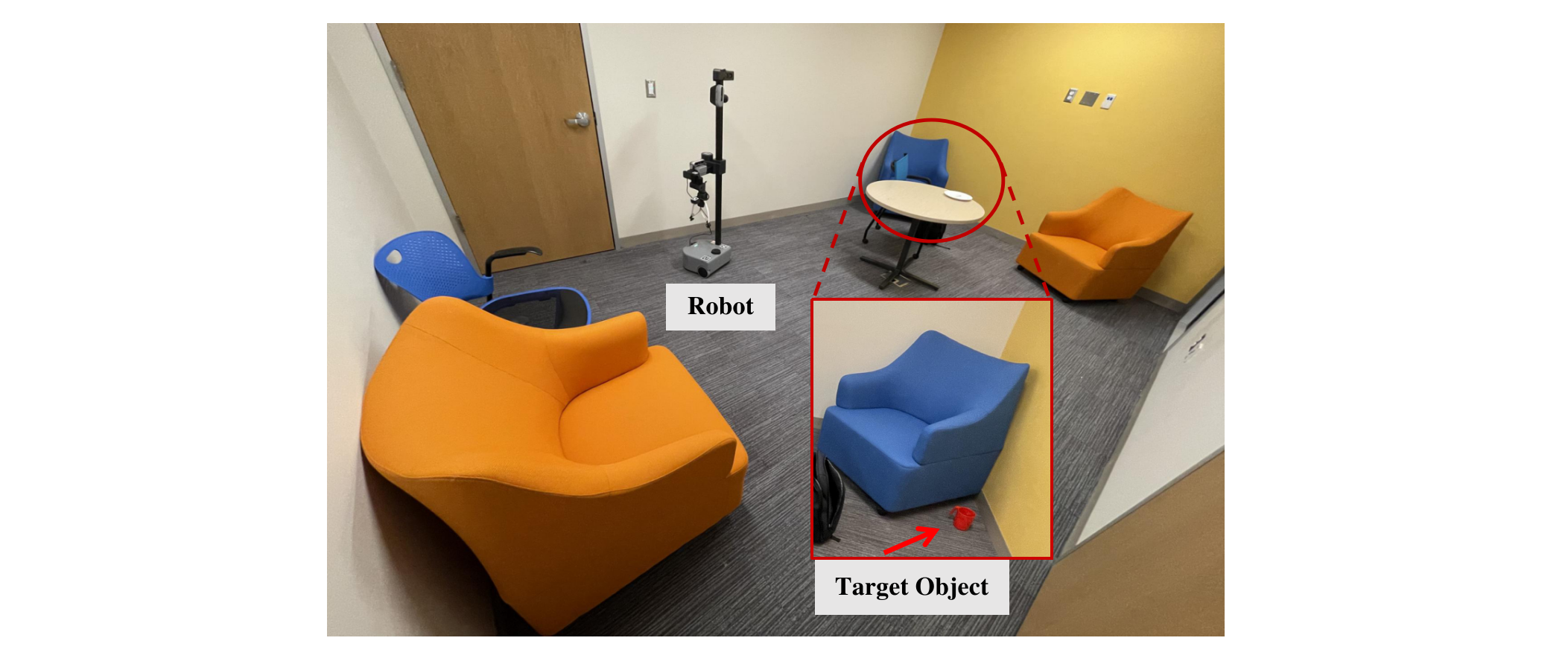

We evaluate 3D-Belief on a real mobile manipulation platform (Hello Robot Stretch) in a mock apartment environment. The environments, objects, and target descriptions were all unseen during training, making this a real-world open-vocabulary object navigation setting.

Open-vocabulary object navigation (search for a mug): 3D-Belief succeeds, while the Gemini-3.0 agent fails due to a collision.

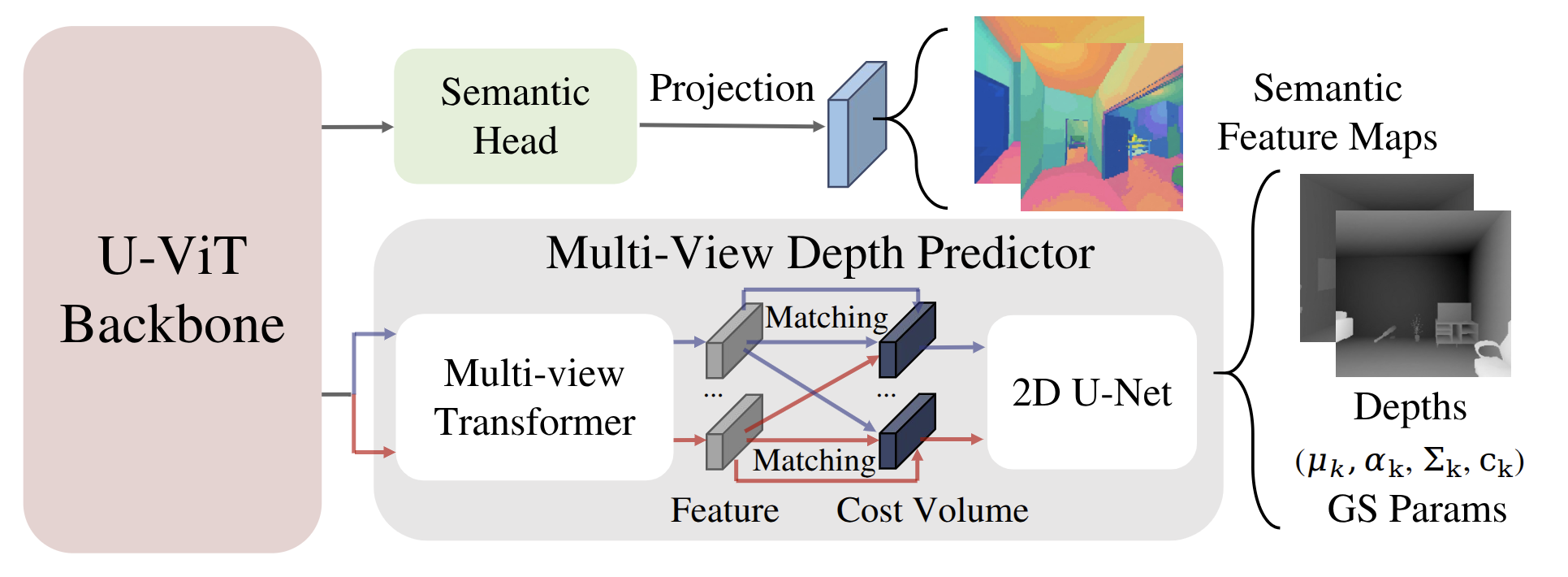

3D-Belief is a diffusion-based generative model with a shared U-ViT backbone and two heads that jointly predict an explicit 3D Gaussian Splatting scene and distilled CLIP-style semantics. A multi-view-stereo (MVS)-style predictor, built from a multi-view Transformer and a cost-volume module, guides depth and geometry, yielding per-primitive Gaussians (mean, covariance, opacity, appearance, semantic embedding) for both observed and imagined regions. Differentiable splatting then renders RGB, depth, and open-vocabulary semantic maps from any viewpoint.
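To make the per-primitive representation and rendering step concrete, here is a minimal sketch under stated assumptions. The covariance is built as R S Sᵀ Rᵀ from a unit quaternion and per-axis scales, which is the convention of the original 3D Gaussian Splatting work; 3D-Belief may parameterize it differently. Appearance is simplified to a single view-independent RGB color (standard 3DGS uses spherical harmonics). The `composite` function shows front-to-back alpha compositing at one pixel, applied identically to color, depth, and semantic features; it assumes the per-Gaussian alphas (opacity times projected 2D Gaussian falloff) are already computed and sorted near to far. The class and function names are hypothetical.

```python
"""Sketch of a Gaussian primitive with semantics and per-pixel alpha compositing.

Assumptions: 3DGS-style quaternion/scale covariance, view-independent color,
and pre-sorted per-pixel alphas. Names are illustrative, not the paper's API.
"""
from dataclasses import dataclass

import numpy as np


@dataclass
class GaussianPrimitive:
    mean: np.ndarray      # (3,) center in world coordinates
    quat: np.ndarray      # (4,) rotation as (w, x, y, z)
    scale: np.ndarray     # (3,) per-axis standard deviations
    opacity: float        # in [0, 1]
    color: np.ndarray     # (3,) RGB appearance, view-independent in this sketch
    semantic: np.ndarray  # (D,) distilled CLIP-style embedding

    def covariance(self) -> np.ndarray:
        """Return the 3x3 covariance R S S^T R^T."""
        w, x, y, z = self.quat / np.linalg.norm(self.quat)
        rot = np.array([
            [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
            [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
            [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
        ])
        s = np.diag(self.scale)
        return rot @ s @ s.T @ rot.T


def composite(alphas, colors, depths, semantics):
    """Front-to-back alpha compositing of RGB, depth, and semantics at one pixel.

    Inputs are sorted near-to-far. Depth is alpha-weighted and not normalized
    by the accumulated opacity (some renderers normalize it).
    """
    alphas = np.asarray(alphas, dtype=float)
    # Transmittance before each primitive: T_i = prod_{j<i} (1 - alpha_j).
    trans = np.concatenate([[1.0], np.cumprod(1.0 - alphas)[:-1]])
    weights = trans * alphas
    rgb = weights @ np.asarray(colors, dtype=float)
    depth = weights @ np.asarray(depths, dtype=float)
    sem = weights @ np.asarray(semantics, dtype=float)
    return rgb, depth, sem
```

Because the same weights are applied to every channel, the rendered semantic map stays pixel-aligned with the rendered RGB and depth, which is what allows open-vocabulary queries against any rendered viewpoint.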

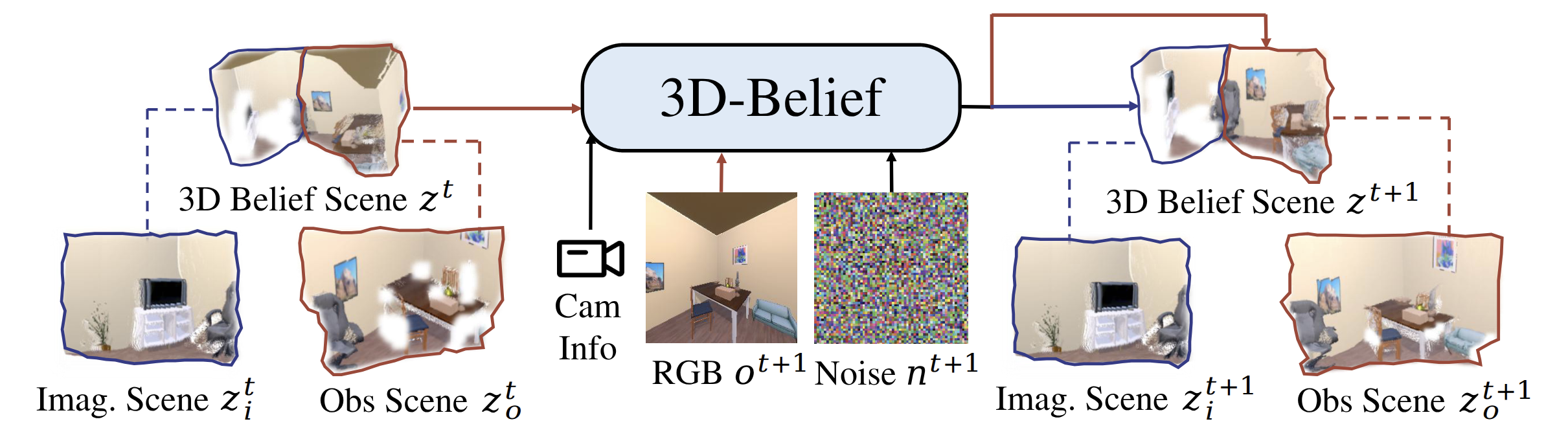

We reformulate POMDP belief tracking as an autoregressive update over explicit 3D representations. As each new observation and camera pose arrives, the observed Gaussians are expanded to incorporate the new evidence, while the previously imagined regions are resampled into a fresh imagination consistent with what is now seen. This keeps a spatially consistent 3D scene memory while continually revising beliefs about unobserved space to support downstream embodied decisions.
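The update described above separates evidence-backed content from imagined content. The sketch below captures only that control flow, with the learned components passed in as callables. `BeliefState`, `update_belief`, `lift_observation`, and `sample_imagination` are hypothetical names; conditioning the resampled imagination on the observed Gaussians alone (rather than also on the previous imagination or pose history) is an assumption made for simplicity.

```python
"""Hypothetical sketch of the autoregressive 3D belief update.

In 3D-Belief the lifting and imagination steps are performed by the learned
generative model; here they are injected so the loop runs on toy data.
"""
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class BeliefState:
    observed: List[Any] = field(default_factory=list)  # Gaussians backed by observations
    imagined: List[Any] = field(default_factory=list)  # Gaussians for unobserved space


def update_belief(
    belief: BeliefState,
    observation: Any,
    pose: Any,
    lift_observation: Callable[[Any, Any, List[Any]], List[Any]],
    sample_imagination: Callable[[List[Any]], List[Any]],
) -> BeliefState:
    # 1) Expand the observed set with Gaussians explained by the new evidence.
    #    A real system would also merge or deduplicate against existing ones.
    new_observed = belief.observed + list(lift_observation(observation, pose, belief.observed))
    # 2) Discard the previous imagination and resample it conditioned on what is
    #    now observed, so imagined regions stay consistent with the evidence.
    new_imagined = list(sample_imagination(new_observed))
    return BeliefState(observed=new_observed, imagined=new_imagined)
```

Keeping the observed set append-only gives the spatially consistent memory, while regenerating the imagined set at every step lets beliefs about unobserved space change as evidence accumulates.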

In this work, we introduce embodied belief inference via generative 3D world modeling and identify key capabilities for practical 3D belief modeling. We propose 3D-Belief, which predicts unseen regions as an explicit 3D representation from partial observations and updates this belief online. Experiments on 2D visual quality, contextual reasoning, and object navigation show that 3D-Belief improves 2D/3D scene memory and imagination, as well as downstream embodied task performance.

@article{yin2026_3dbelief,
  title = {3D-Belief: Embodied Belief Inference via Generative 3D World Modeling},
  author = {Yin, Yifan and Wen, Zehao and Ye, Suyu and Chen, Jieneng and Zheng, Zehan and Dai, Nanru and Shi, Haojun and Huang, Aydan and Zhang, Zheyuan and Yuille, Alan and Xie, Jianwen and Tewari, Ayush and Shu, Tianmin},
  journal = {arXiv preprint arXiv:2605.11367},
  year = {2026},
  doi = {10.48550/arXiv.2605.11367},
  url = {https://arxiv.org/abs/2605.11367}
}